On AI

About my AI anxiety

2022 - A friend sends me a link and says just try it. I type something mundane into this chat interface I’ve never seen before, and it comes back with this articulate, structured, coherent response. I sit there staring at my screen for a second. My first reaction is something between amazement and mild dread, like watching a magic trick I can’t figure out.

This is insane.

I show a few people that same night. Everyone has the same reaction. We laugh about it, mess around with it for a bit, and move on with our lives. It’s a cool thing. A novelty.

That was four years ago. I think about that moment a lot lately. Not because it was particularly significant at the time, but because of how much has changed since, and how fast. And because I’m not sure I fully processed what I was actually looking at that night.


I scroll Twitter and LinkedIn too much. And my feed has been overrun by two types of posts.

The first is the startup bro variety. YC founders and a16z investors talking about how AI is going to change everything, how legacy companies are already dead and just don’t know it yet, how if you’re not building with AI you’re already irrelevant. These posts usually come with a 47-tweet thread that opens with “I’ve been thinking a lot lately...”

The second type is the doomer variety. Long anxious essays about how we’re sleepwalking into catastrophe. How the jobs aren’t coming back. How society as we know it is cooked.

I’ve been rolling my eyes at both for a while. But recently I’ve been taking the time to actually read them. And I’m not sure how to feel.

All this discourse reminds me of the climate anxiety phase my generation went through five or ten years ago. Back then I kept seeing people genuinely freaking out: questioning whether to have kids, whether to bother saving for retirement, whether any long-term plan made sense. The anxiety felt overblown to me. Performative. I laughed it off. Not because I didn’t believe climate change was real, but because the panic felt out of proportion to anything I could see directly in front of me.

I can’t do the same thing with AI. And I think the reason is that this time, I can see it happening.

I’m in my final year of a business degree. And lately I’ve been sitting in lectures thinking about whether any of this will matter in two years.

Not in the abstract “will my degree be useful” way that every student worries about. In a much more specific way. I’ll be in a finance class working through a model and think: Claude can do this. Faster than me, cleaner than me, probably with fewer errors. I’ll be in a group presentation and think: if Claude can synthesize this material and structure an argument better than I can, why does my ability to stand at the front of a room and explain it matter? Presenting has always been treated as the safe, irreducibly human part of knowledge work. Eye contact. Reading the room. Fielding questions. But if the underlying thinking and structure and content can all be done better by a machine, what exactly am I bringing?

Anthropic (the company that makes Claude) recently published a paper on AI and the labor market. They built something called an observed exposure measure, which tracks not just what AI could theoretically do but what it’s actually being used for in professional settings right now. The headline finding is that the gap between AI’s capability and actual adoption is still enormous. In computer and math roles, AI could theoretically handle 94% of tasks. In practice it’s covering about 33%. Long way to go.

That sounds reassuring. In some ways it is.

But here’s the part that got me. Among workers aged 22 to 25, hiring in AI-exposed roles has already dropped roughly 14% compared to pre-ChatGPT levels. The door is quietly closing on my generation, and AI hasn’t even approached its theoretical ceiling yet.

Jobs with higher AI exposure are also projected to grow more slowly over the next decade. And the paper explicitly names the scenario they’re watching for: a “Great Recession for white-collar workers,” where unemployment in some occupations doubles from 3% to 6%.

I know the counterarguments to my worry. AI can’t make judgment calls. It doesn’t understand relationships. People want the human touch. You need someone who can read the room. I think that’s all true right now. But my mind keeps going back to the same question: is it true because humans are actually better at those things, or just because we’re used to humans doing them? Is this just a form of status quo bias that probably won’t last? If the AI is really that much better than a human, who cares if it has emotions? Maybe in the future, the results AI produces speak for themselves, and everything else will fall into line around that.

There’s a concept in philosophy called “god of the gaps.” As science explains more of the world, religious explanations get pushed into whatever corners science hasn’t reached yet. The divine retreats into the gaps. And the gaps keep shrinking. (For the record, I think this is a flawed argument, but bear with me.)

I think about this constantly in relation to the “humans are irreplaceable” argument. Every time someone makes that case, they point to whatever AI hasn’t figured out yet. Five years ago it couldn’t write coherently. Then it could. Couldn’t code. Then it could. Couldn’t reason through complex problems. Now that’s genuinely debatable. Each time a gap closes, the argument shifts to the next one. Judgment. Relationships. Emotional intelligence. Creativity.

Maybe those gaps never fully close. Probably they don’t. But I keep asking a different question: even if there’s always something humans do better, are those remaining gaps enough to build a career on? “Humans of the gaps” - forever retreating to whatever corner hasn’t been automated yet - is that really the future we are destined for?

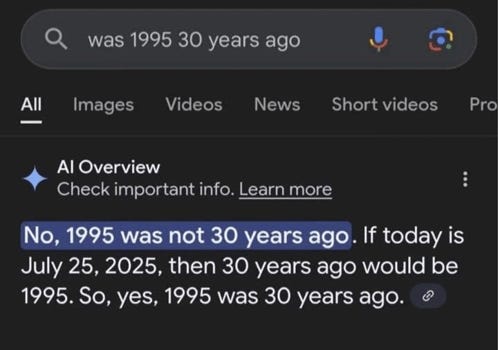

Not long ago I was trying to get Claude to help me with something simple in a spreadsheet. I explained what I wanted. It gave me something that looked right. I ran it. Didn’t work. I told it what went wrong. It apologized with the classic “Oh, you’re right!” catchphrase that’s become symbolic of AI, re-explained its reasoning, gave me a revised version. Also didn’t work. I rephrased the problem three different ways. Same result, different packaging each time. After forty minutes of this I gave up and figured it out myself in ten minutes.

This wasn’t a one-off. Anyone who actually uses these tools will tell you the same thing. There’s a persistent gap between how AI performs in a polished demo and how it behaves when you’re in the weeds of a real, slightly messy problem. It hallucinates confidently. It apologizes and then makes the same mistake. It goes in circles without flagging that it’s stuck.

So maybe the “not reliable enough” argument holds. Maybe AI never crosses the threshold that causes real mass disruption. Maybe the demos are always better than the reality.

Except I already thought that once. Four years ago I would not have predicted where we are now. In 2022 this was a party trick that made me and my friends go “this is insane,” then move on with our lives. Now it’s a workforce strategy being discussed in boardrooms.

The other classic counter-argument to all of this is that human desires are unlimited. So as AI fills some of them and some jobs are lost, new ones will be created. Every wave of automation has eventually created more than it destroyed. ATMs didn’t kill bank tellers. Computers didn’t eliminate office workers. New industries emerged, employment held up. Real historical pattern.

But this argument operates at a level of abstraction that doesn’t actually answer my question. Yes, on a long enough timeline the economy adapts. But the transition period is not nothing. People lose careers in the gaps. And the new jobs don’t automatically go to the people whose old ones disappeared. A 45-year-old lawyer replaced by AI is not seamlessly pivoting to whatever the new economy needs. “The economy creates new jobs eventually” is cold comfort if you’re the one falling through the gap in the meantime.

My worry isn’t really about whether new jobs exist. It’s about whether I’ll have the skills for them. And that uncertainty just sits there.

The economics of the AI industry are also worth flagging, because they don’t make sense. These companies are burning enormous amounts of capital. Compute costs are massive. None of the major labs are profitable. Pricing right now is heavily subsidized. What happens to “AI is cheaper than humans” when the models are actually priced to cover their costs? And when the market is so monopolistic that firms can charge essentially whatever they want? I don’t know.

Then there’s the social side.

If you’re as chronically online as I am, you’ll get what I mean. Cluely is the best-known example. They built a tool that sits on your screen during job interviews and feeds you answers in real time. They launched it by filming themselves getting kicked out of Y Combinator demo day for using it to cheat, then posted the video as marketing. The pitch was essentially: integrity is for people who can’t get away with ignoring it.

You could write that off as one bad actor. But the texture of it (shamelessness dressed up as disruption, edginess as a substitute for substance) shows up everywhere. The LinkedIn posts announcing, with the energy of someone who has just achieved immortality, an AI-native something-company that’s just a GPT wrapper. The YC batches where every third company is applying AI to an industry its founders know nothing about. The a16z content machine, which at this point functions less like a venture fund and more like a media operation for a vision of the future that happens to be very convenient for a16z.

The people going into this space are genuinely brilliant. And a lot of them are building ChatGPT wrappers with a nice landing page, or becoming, to borrow a phrase I saw on Twitter, influencers cosplaying as founders. Which, fine. People follow incentives, and the incentives right now point hard in this direction. But you look at the aggregate of that talent and you wonder what else it could be pointed at. The same person spending two years building sperm racing could be working on public health systems, or education infrastructure, or climate technology - problems that are deeply important and chronically underfunded precisely because they don’t have a clear path to a $100M Series B.

As a society, we’re pulling a generation of capable, motivated people toward a very narrow set of problems, because that’s where the attention is. And attention is a powerful recruiting tool. Before you point it out, I see the irony in me saying this.

Some days I think maybe AI really is the most important problem to work on right now. Maybe concentrating talent here makes sense. I genuinely don’t know. The pull is just there, people follow it, and the opportunity cost is invisible so it doesn’t get discussed.

I don’t know what any of this means for me, or for my generation. And I’m not sure writing this piece has helped me figure it out. If anything, it’s made the uncertainty feel more concrete, which I’m not sure is better.

I’ve been trying my best to keep up with the times. Using Cursor to build things despite having no programming background. Making myself actually use the tools rather than just reading hot takes about them. In fact, Claude helped me with a lot of this piece. It made the writing process much easier. It also made the uncertainty that much worse. There’s something strange about using the thing you’re worried about to articulate why you’re worried about it.

The range of confident opinion out there is wild. Some people think this is civilizational-level disruption: UBI is inevitable, the social contract needs rethinking, Andrew Yang was ahead of his time. Others say it’s a tool, relax, the economy always figures it out. Both camps say their thing with identical confidence. Both cite data. I have no idea who to believe, and I’m increasingly suspicious of anyone who seems sure. The certainty itself feels like a tell. Nobody actually knows how this ends.

What I keep landing on is this: the anxiety I feel about AI is not irrational. The Anthropic paper found signals of disruption in the hiring patterns of people my age, and that’s coming from a company with every incentive to say everything is fine.

I think about that night in 2022 a lot. Sitting there staring at my screen, feeling something I couldn’t quite name. I still can’t quite name it. It was something close to the feeling you get when you realize a situation has already changed and you’re only just catching up to it. Like the thing you were looking at had been growing in the dark for a while, and the link my friend sent me was the first time someone turned a light on.

Four years later I’m still not sure what to do with that feeling. But I’m pretty sure ignoring it isn’t the answer.

The thing that stays with me from this piece is the honesty of not knowing. In a landscape where everyone claims certainty—either utopia or apocalypse—you're sitting with the ambiguity. That takes more intellectual courage than most of the takes out there.

Your "humans of the gaps" framing is sharp—I've been circling the same thought but couldn't name it. The retreat keeps happening: first it was "AI can't write," then "can't code," now we're down to "judgment" and "emotional intelligence." Each time the gap closes, the argument shifts.

What struck me most: you used Claude to write about worrying about Claude. That recursive unease feels like the real story. We're already inside the transition, using the tools to process what the tools mean. The irony isn't lost on you, and that self-awareness makes this piece land harder than most AI anxiety essays I've read.

The hiring data for 22-25 year-olds is the part I can't shake. "The door is quietly closing on my generation"—that line will stay with me.